Let me ask you something.

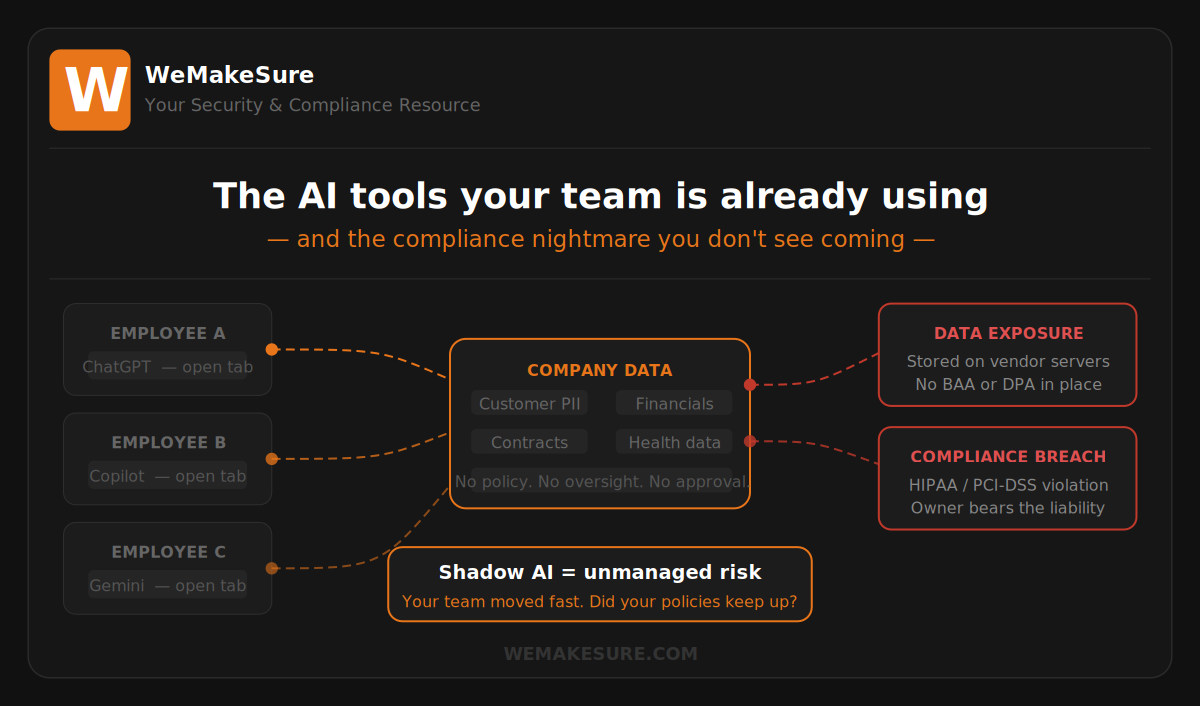

Do you know every AI tool your team used this week?

Not the ones you approved. Not the ones in your IT inventory.

All of them.

Because right now, someone on your team has probably used ChatGPT to draft a client email. Someone else ran a contract through an AI summarizer. Your bookkeeper might have used an AI assistant to reconcile accounts — pasting in figures they probably shouldn’t have shared outside your walls.

They weren’t trying to cause a problem.

They were trying to get their work done.

But here’s the compliance reality: good intentions don’t erase a violation in a regulator’s eyes. Data exposure is data exposure, whether it was accidental or not.

Shadow AI is the new shadow IT

Remember shadow IT? The era when employees were installing Dropbox, Google Drive, and Slack without telling anyone — and IT teams were scrambling to figure out where company data had ended up?

We’re in that moment again. Except this time, it’s AI — and it moves faster, touches more data, and carries compliance exposure that most small business owners haven’t thought through yet.

Tools like ChatGPT, Microsoft Copilot, Google Gemini, Grammarly Business, Notion AI, and dozens of others are being adopted at the team level — not the leadership level. No procurement process. No legal review. No data handling agreement. Just a free trial, a browser tab, and a whole lot of company information flowing somewhere you didn’t sanction.

Large enterprises have compliance teams catching this. Most small businesses don’t. Which means the exposure lands directly on you.

What’s actually at risk

When an employee pastes customer data, a contract, or a financial record into an AI tool, a few things can happen — none of them in your favor:

- That data may be stored on the AI provider’s servers, sometimes used for model training, depending on the tool and whether a business agreement is in place.

- If you’re subject to HIPAA, PCI DSS, or state privacy laws like the CCPA, sharing protected data with a third-party tool that isn’t covered by the right agreement can itself be a violation.

- If that AI tool is breached or has a data incident, your customer data could be exposed, and you may have no contractual recourse because you never entered a Business Associate Agreement or Data Processing Agreement.

- AI tools can generate confident-sounding but incorrect legal, financial, or compliance guidance. If someone on your team acts on that guidance without checking, you own the mistake.

This isn’t hypothetical. It’s happening in small businesses every day.

Why this is a people problem first

Here’s what I want you to resist: the urge to frame this as a technology problem.

You can block AI websites at the firewall level. You can lock down browser extensions. You can write a policy and call it done.

None of that will work in the long term — because your employees aren’t doing this to circumvent your policies. They’re doing it because they want to do their jobs well. AI tools make people faster, more confident, and frankly, better at certain tasks. That instinct isn’t the enemy.

The problem is that no one has told them what the rules are. Not because you’re negligent — but because most small businesses haven’t built AI governance yet. It’s new territory for everyone.

And when there are no rules, people make reasonable-looking decisions that create unreasonable risk.

Three things you can do this week

You don’t need a full AI governance framework before you act. Here’s where to start:

1. Find out what’s already being used.

Ask your team — without judgment — which AI tools they’re currently using and what they’re using them for. You’ll likely be surprised. This isn’t an audit; it’s a conversation. The goal is visibility, not punishment. You can’t manage what you don’t know about.

2. Establish a simple “green light / red light” policy.

You don’t need legal prose. You need clarity. Tell your team: here are the AI tools we’ve approved (green light), here’s what data you should never put into any AI tool (red light), and here’s how to ask if you’re unsure (escalation path). One page. Plain language. Posted somewhere they’ll actually see it.
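If someone on your team is comfortable with a little scripting, the same one-page policy can also be written down as a small check. What follows is a rough sketch, not a finished tool: the tool names in `APPROVED_TOOLS` are made-up placeholders, the two patterns are only illustrative examples of “red light” data, and the function name `check_use` is hypothetical. It won’t catch everything, and it doesn’t replace the conversation with your team.

```python
import re

# Hypothetical "green light" list; replace with the tools you have actually approved.
APPROVED_TOOLS = {"chatgpt-team", "copilot-business"}

# Illustrative "red light" patterns only; real sensitive data takes many more forms.
RED_LIGHT_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def check_use(tool: str, text: str) -> tuple[str, list[str]]:
    """Return ("green" | "red" | "ask", reasons) for a proposed AI tool use."""
    # Red light first: sensitive data is off limits in any tool, approved or not.
    hits = [name for name, pattern in RED_LIGHT_PATTERNS.items() if pattern.search(text)]
    if hits:
        return "red", hits
    # Green light: an approved tool and no red-light data detected.
    if tool.lower() in APPROVED_TOOLS:
        return "green", []
    # Anything else goes down the escalation path.
    return "ask", [f"{tool} is not on the approved list"]


if __name__ == "__main__":
    print(check_use("chatgpt-team", "Draft a friendly reminder about Friday's meeting"))
    print(check_use("chatgpt-team", "Customer SSN is 123-45-6789"))
    print(check_use("RandomSummarizer", "Summarize this public blog post"))
```

The order of the checks mirrors the policy: red-light data is refused even in an approved tool, and an unknown tool is never silently allowed; it is sent to the “ask” path.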

3. Review the terms and data policies of any AI tools you do approve.

Most business-tier AI subscriptions include data processing agreements and commit not to train on your data. Many free-tier tools don’t. Know which tier you’re on, and read the terms rather than assuming. If you’re in a regulated industry (healthcare, finance, legal), make sure any approved AI tool has the right contractual protections in place before your data goes near it.

The bottom line

AI adoption isn’t slowing down. Your team will keep finding tools that make their jobs easier — and that’s a good thing.

But right now, most small businesses have a gap: their employees are ahead of their policies. That gap is where compliance exposure lives.

The goal isn’t to slow your team down. It’s to make sure that when they move fast, they don’t drag your company into a compliance problem that could have been prevented with one conversation.

That conversation starts with you.

—

Need help building an AI use policy for your small business? Reach out to the We Make Sure team — we help organizations navigate exactly this kind of emerging compliance challenge.

wemakesure.com